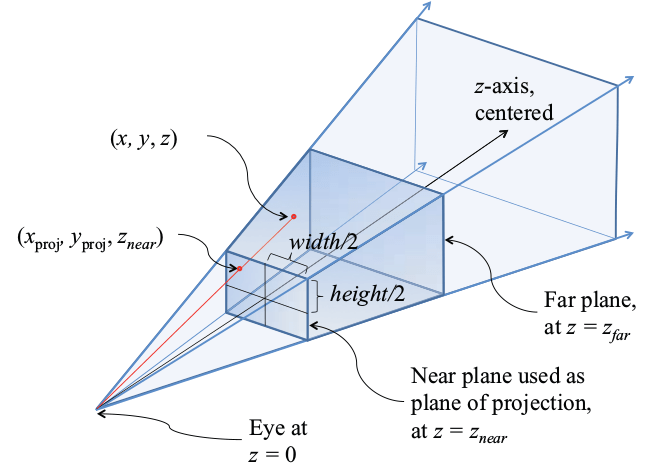

Lately I've been working on computer vision projects involving TensorFlow for deep learning, OpenCV for computer vision, and OpenGL for computer graphics. I'm especially interested in hybrid approaches that mix deep learning, OpenCV, and the classic OpenGL pipeline. The main idea is to avoid framing problems as black box problems, throw a neural … Continue reading Augmented Reality with OpenCV and OpenGL: the tricky projection matrix

Author: Fruty

Scaling a Software Team: Feedback on Azure DevOps

First of all, let me get this straight: I've never been a big fan of Azure. I'm more of a hardcore AWS fan: I love the way it's built, its reliability, and its design focus on infrastructure. But every once in a while, it's good to open your chakras and try new things. I recently worked with … Continue reading Scaling a Software Team: Feedback on Azure DevOps

The Virtues of Low-code Digital Transformation

When we speak of digital transformation, there are two kinds of considerations: strategic considerations (how tech can disrupt your business model), and operational considerations (how tech can streamline your operations). Here, we're going to talk about the second kind. Lately I've been working with a large industrial corporation (thousands of employees) on a company-wide digital transformation effort. … Continue reading The Virtues of Low-code Digital Transformation

Building a Scalable React App with Next.js

For those who grew up in the 1990s, modern web development sometimes feels like massive overkill. It's now all about SPAs (single-page applications) built with complex JavaScript frameworks, but let's be honest: does everybody actually need them? From an end-user perspective, for most use cases (corporate front-ends, forums, etc.), the old paradigm of static pages and … Continue reading Building a Scalable React App with Next.js

Trading Automation with Interactive Broker API, Python and Docker

Crypto trading has been all the rage over the past few years, and people tend to forget that there are many more trading opportunities in the real world than in the crypto world. Unfortunately, the real world often uses outdated tech and it can be a real pain to finally manage to perform … Continue reading Trading Automation with Interactive Broker API, Python and Docker

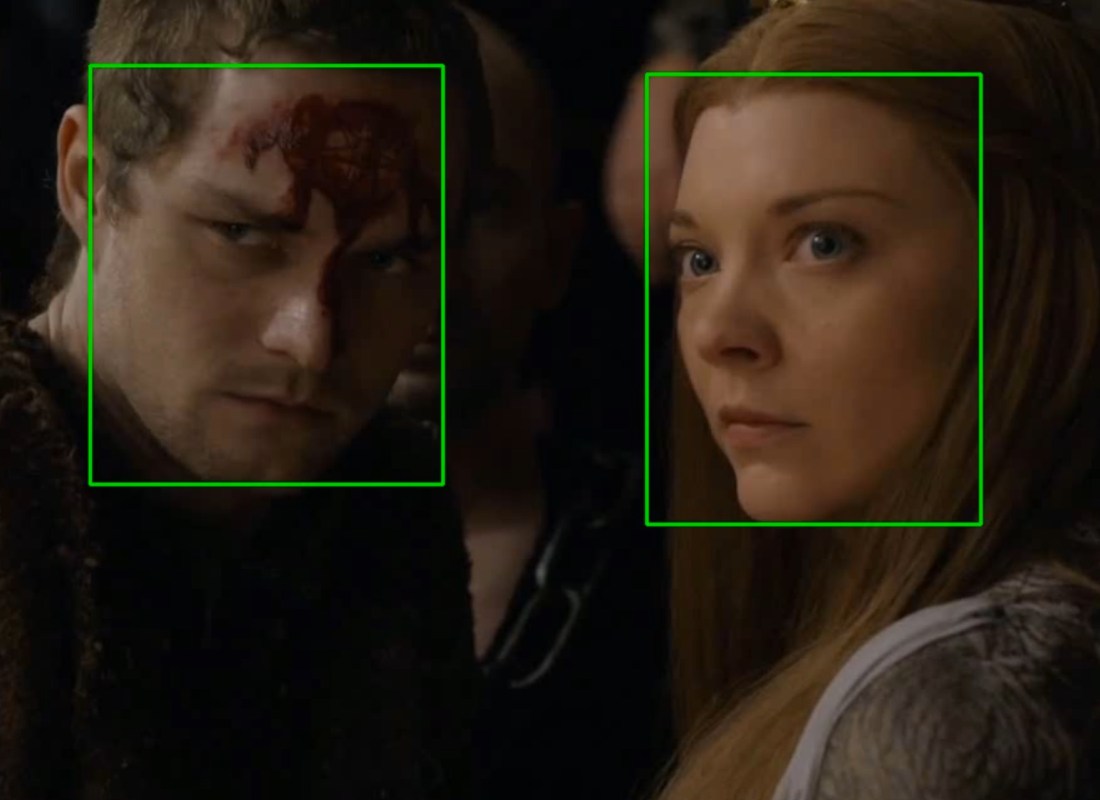

State of the art (2019) face detection with RetinaFace and MXNet

Testing deep learning neural networks on public datasets is fun, but it's usually on unseen data that you can see how the published techniques really perform. Recently, I was trying to detect human faces in Game of Thrones footage. I was surprised to see that the most widely used techniques didn't fare very well. … Continue reading State of the art (2019) face detection with RetinaFace and MXNet

Excel to Pandas to PostgreSQL: Data Science for Strategy Consulting

Lately I've been working regularly with strategy consultancies on data and AI matters. While corporate ambitions around data and AI are rising everywhere, when it comes to day-to-day operations, most of the data is still fragmented across many databases, and people use Excel sheets as an exchange format. This in itself is interesting, because it means a corporate … Continue reading Excel to Pandas to PostgreSQL: Data Science for Strategy Consulting

AWS Outposts, Snowball: Precursors of AWS Region-as-a-service?

AWS recently unveiled Outposts, a way to deploy on-premises servers that blend seamlessly into the AWS cloud, and Snowball, a rugged server with local EC2 and S3 that can be deployed anywhere on the planet, even without connectivity. Apart from the engineering achievement, what’s interesting about these two announcements is that AWS seems to be … Continue reading AWS Outposts, Snowball: Precursors of AWS Region-as-a-service?

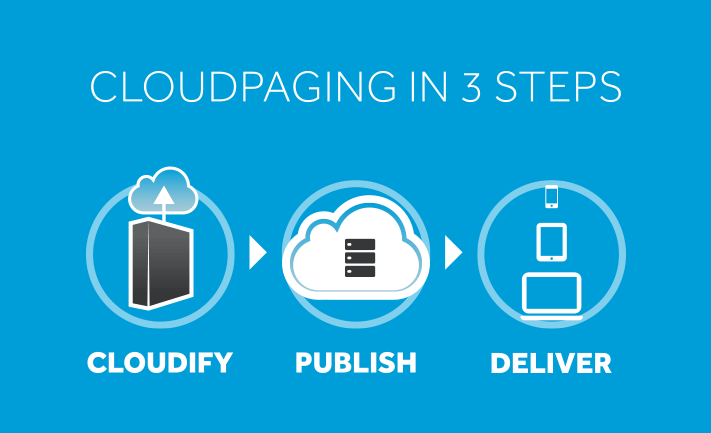

What Happened to Cloud Paging Technology?

Every once in a while, you see an impressive piece of tech arrive on the market. Usually the engineer in me is blown away by its elegance and/or the freshness of its technical approach to a problem. But my inner businessman wonders whether the company founders will manage to propose a compelling business case to their customer … Continue reading What Happened to Cloud Paging Technology?

Spoon: Containerization Before it was Cool, on Windows

A few years ago, I ran a cloud rendering startup that worked with VFX studios. We worked with a few mid-size studios that used Windows on all their machines. We were faced with the challenge of deploying Windows server farms and finding practical ways to programmatically install heavy software (3ds Max, Maya, V-Ray...) on all the … Continue reading Spoon: Containerization Before it was Cool, on Windows